NovelAI Image Story Creation Tool Leaked

A new set of NovelAI Diffusion image generation samplers: use powerful image models to depict characters and moments from your stories with the leading anime art AI and other AI models. Here is how to run the leaked NovelAI image story creation tool.


NovelAI aka NAI Diffusion is an anime image generator that was released in October 2022.

Here’s our full review of the image generator on the official website. The tool requires a monthly subscription to use and the cheapest plan is $10.

You can, however, run NovelAI for free on your own computer and get nearly identical outputs to the paid version.

The installation process takes 10 minutes, minus download times. You’ll need around 10GB of free space on your hard drive.

Common points of confusion:

- People refer to both the official website and the local model as NAI Diffusion or NovelAI.

- The local model is sometimes referred to by its filename, animefull or animefull_final.

To run the local model, you will need to download a User Interface to go with it.

In this guide, we’ll show you how to download and run the NovelAI/NAI Diffusion model with the AUTOMATIC1111 user interface.

Let’s get started!

Installation

Before proceeding with installation, here are the recommended specs:

- 16GB RAM

- NVIDIA (GTX 7xx or newer) GPU with at least 2GB VRAM (AMD GPUs will work, but NVIDIA is recommended)

- Linux or Windows 7/8/10/11 or Mac M1/M2 (Apple Silicon)

- 10GB disk space (includes models)

1. Download the model file

The model file contains what the AI learned during training, and it determines what the AI is capable of generating. Model files end in the extension ‘.ckpt’ or ‘.safetensors’.

As mentioned, we’ll be downloading animefull (which is just what people call the NovelAI/NAI Diffusion model).

Note: There are now much better anime models available. You can try them while following the rest of this guide; the model file is interchangeable.

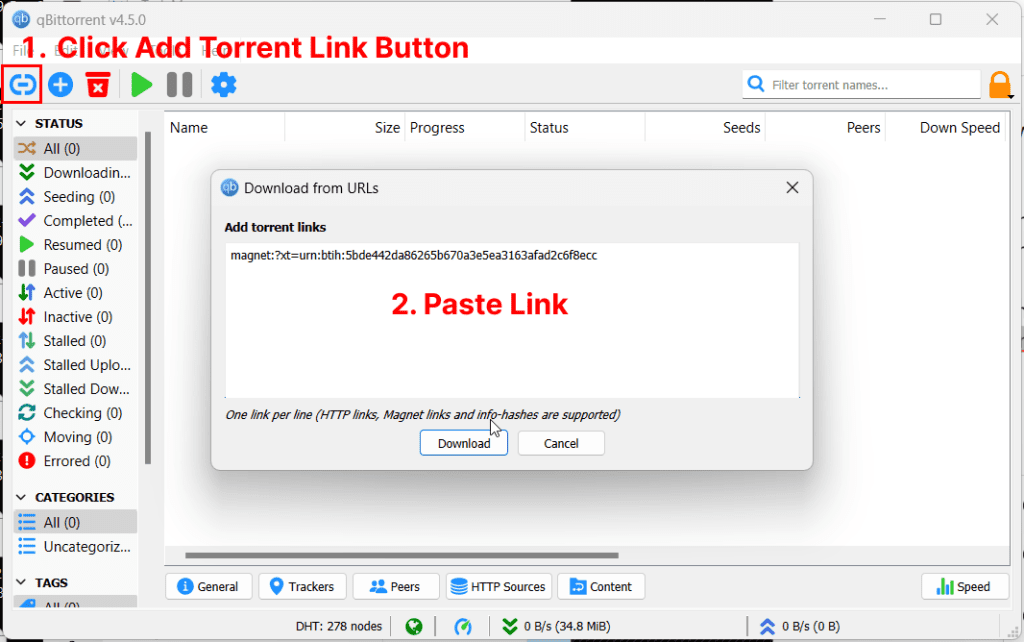

Download a torrent client if you don’t have one already. I recommend qBittorrent (works on Windows/macOS/Linux).

Add the following torrent magnet link:

magnet:?xt=urn:btih:5bde442da86265b670a3e5ea3163afad2c6f8ecc

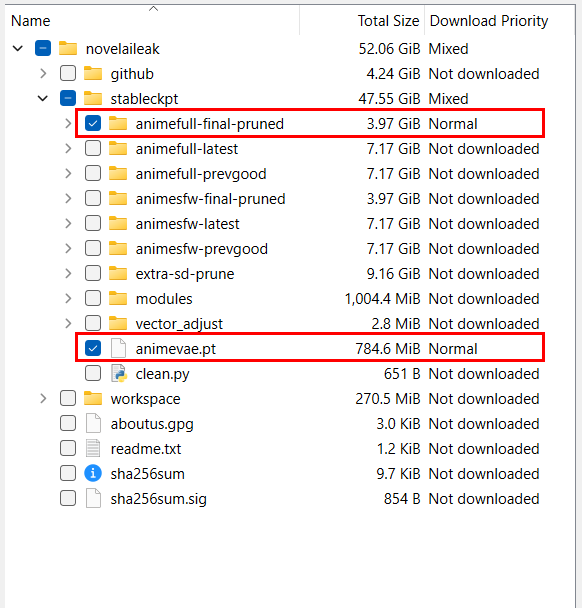

Deselect everything except the subfolder “animefull-final-pruned” and the file “animevae.pt” (both are located in the /stableckpt folder).

You will notice there are pruned and unpruned models. A pruned model is a slimmed-down file with data that is mostly useful for further training removed, which makes it much smaller. Most people use the pruned one.

While the model is downloading in the background you can move on to the next step.

2. Download the Web UI

This is the user interface you will use to run the generations.

The most popular user interface is AUTOMATIC1111’s Stable Diffusion WebUI.

You’ll notice it’s called the Stable Diffusion interface.

Stable Diffusion is the name of the official base models published by StabilityAI and its partners, but it is used colloquially to refer to any of the custom models which people created using these base models.

Here are the installation instructions for the WebUI depending on your platform. They will open in new tabs, so you can come back to this guide after you have completed the WebUI installation:

- Installation for Windows (NVIDIA GPU)

- Installation for Windows (AMD GPU)

- Installation for Apple Silicon (Mac M1/M2)

3. Place the Model in the Web UI folder

After your model finishes downloading, go to the folder “novelaileak/stableckpt”.

You want the following files:

model.ckpt in folder stableckpt/animefull-final-pruned: This is the model file. This is the only one you actually need.

config.yaml in folder stableckpt/animefull-final-pruned: Configuration file.

animevae.pt in folder stableckpt: This is the Variational Autoencoder (VAE). Image generation is done in a “compressed” (latent) form, and the VAE turns those compressed results into full-sized images. The end result is more vibrant colors and better details.

Copy these 3 files into the folder stable-diffusion-webui/models/Stable-diffusion.

(stable-diffusion-webui is the containing folder of the Web UI you downloaded in the previous step)

Rename the files like so:

- model.ckpt -> nai.ckpt

- config.yaml -> nai.yaml

- animevae.pt -> nai.vae.pt (write it exactly like this)

You can actually name these files whatever you want, as long as the name before the first “.” is the same.

Since you will be placing all future models into this folder, choose a descriptive name that helps you remember what each model is.
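If you would rather script this step, here is a minimal sketch using only the Python standard library. `install_nai` is a hypothetical helper written for this guide; the folder and file names come from the steps above, but where `novelaileak` and `stable-diffusion-webui` live on your disk is up to you.

```python
import shutil
from pathlib import Path


def install_nai(leak_dir: Path, webui_dir: Path, name: str = "nai") -> list:
    """Copy model, config and VAE into the WebUI, giving them one shared base name."""
    src = leak_dir / "stableckpt"
    dest = webui_dir / "models" / "Stable-diffusion"
    dest.mkdir(parents=True, exist_ok=True)

    # source file -> new file name (same part before the first ".")
    plan = {
        src / "animefull-final-pruned" / "model.ckpt": f"{name}.ckpt",
        src / "animefull-final-pruned" / "config.yaml": f"{name}.yaml",
        src / "animevae.pt": f"{name}.vae.pt",
    }
    copied = []
    for source, new_name in plan.items():
        if not source.is_file():
            raise FileNotFoundError(f"missing {source}")
        target = dest / new_name
        shutil.copy2(source, target)  # copy, keeping the original download intact
        copied.append(target)
    return copied
```

Usage, with placeholder paths: `install_nai(Path("novelaileak"), Path("stable-diffusion-webui"))`. Copying rather than moving keeps the torrent seedable and lets you re-run the script with a different `name`.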

4. Start the WebUI

- Windows: double-click webui-user.bat (Windows Batch File) to start

- Mac: run the command ./webui.sh in terminal to start

- Linux: run the command ./webui.sh in terminal to start

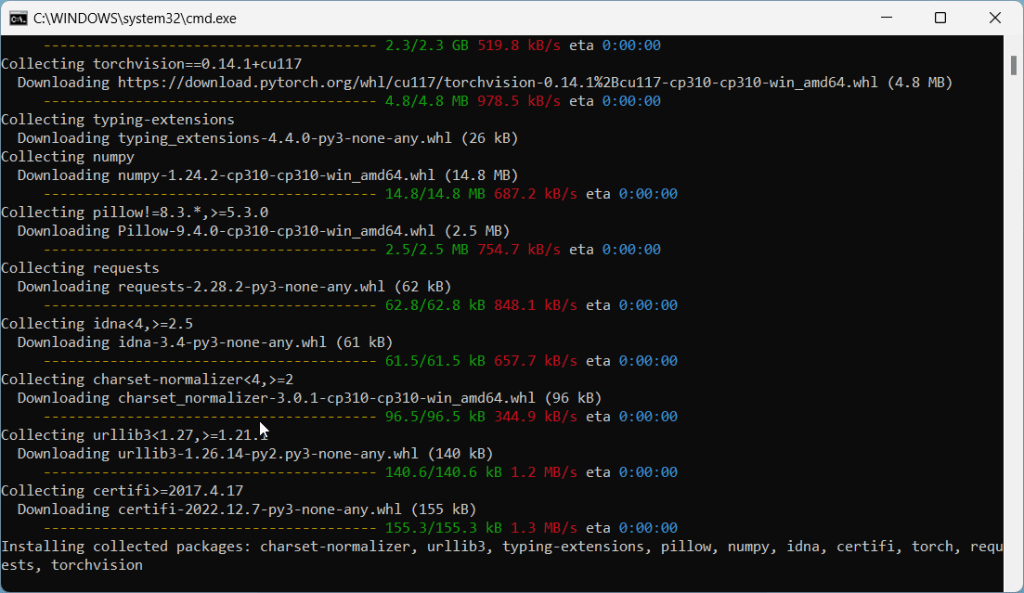

On the first run, the WebUI will download and install some additional modules. This may take a few minutes.

Common issues at this step:

RuntimeError: Couldn’t install torch.

Fix: Make sure you have Python 3.10.x installed. Type python --version in your Command Prompt. If you have an older version of Python, you will need to uninstall it and reinstall it from the Python website.

Fix #2: Your Python version is correct but you still get the same error? Delete the venv directory in stable-diffusion-webui and re-run webui-user.bat
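If you are not sure which interpreter your `python` command points to, this small check mirrors the `python --version` test above. Run it with the same `python` command; the 3.10 requirement is the one stated in the fix, and `is_supported_python` is just an illustrative name.

```python
import sys


def is_supported_python(version=None) -> bool:
    """True if the interpreter (or the given version tuple) is Python 3.10.x."""
    major, minor = (version or sys.version_info)[:2]
    return (major, minor) == (3, 10)


if __name__ == "__main__":
    found = ".".join(str(part) for part in sys.version_info[:3])
    if is_supported_python():
        print(f"Python {found}: OK")
    else:
        print(f"Python {found}: install Python 3.10.x before running the WebUI")
```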

RuntimeError: Cannot add middleware after an application has started

Fix: Go into your stable-diffusion-webui folder, right-click -> Open Terminal. Copy and paste this line into the terminal and press Enter:

.\venv\Scripts\python.exe -m pip install --upgrade fastapi==0.90.1

See more common issues in the troubleshooting section.

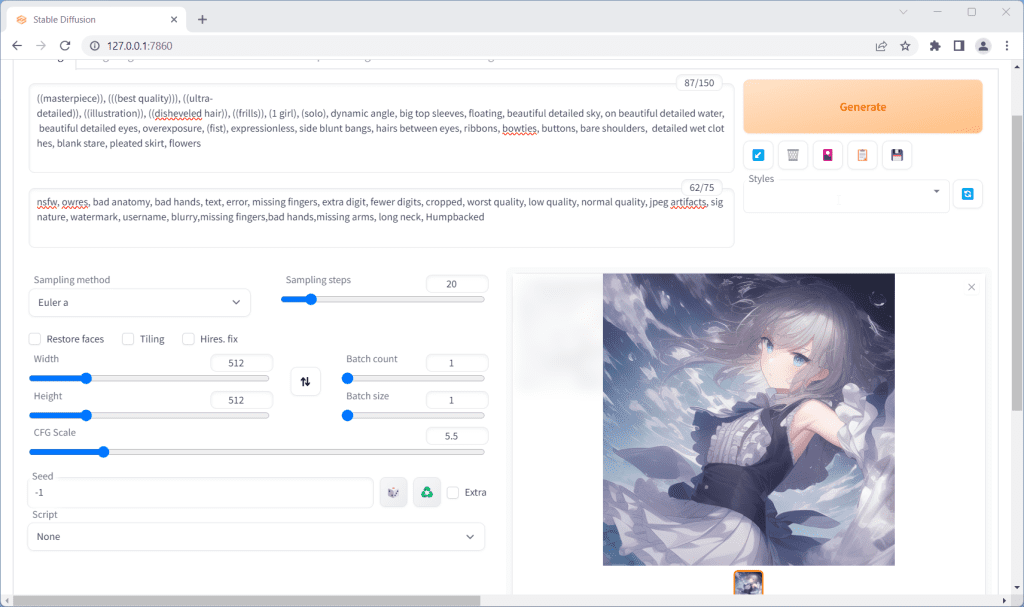

Open WebUI in Browser

In my command prompt, I get the success message Running on local URL: http://127.0.0.1:7860

So I would open my web browser and go to the following address: http://127.0.0.1:7860.

Everything else works but not the web address

- Make sure you have not typed “https://” by accident; the address should start with “http://”.

- In this example my web address is http://127.0.0.1:7860. Yours might be different, so read the success message in your Command Prompt carefully for the correct web address.
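If you keep the console output in a log file, a short sketch like this can pull the address out of the success message so you do not have to retype it. It assumes the message is worded exactly as quoted above (“Running on local URL: …”); other WebUI versions may print it differently.

```python
import re
from typing import Optional

# Matches the success line quoted above, e.g.
# "Running on local URL: http://127.0.0.1:7860"
LOCAL_URL = re.compile(r"Running on local URL:\s*(\S+)")


def find_local_url(console_output: str) -> Optional[str]:
    """Return the first local URL the WebUI printed, or None if there is none."""
    match = LOCAL_URL.search(console_output)
    return match.group(1) if match else None


def is_plain_http(url: str) -> bool:
    """The local WebUI address should start with http://, not https://."""
    return url.startswith("http://")
```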

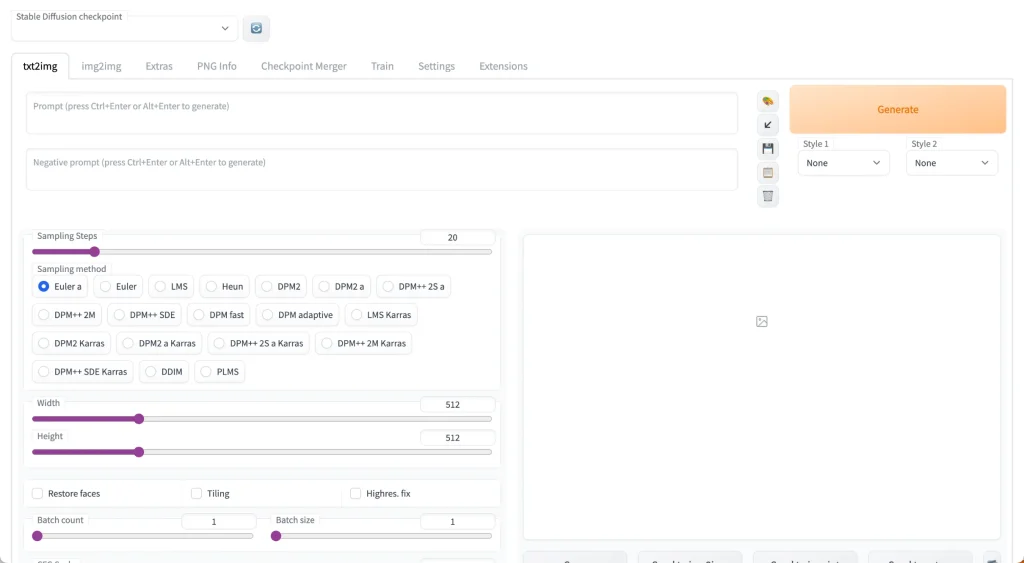

You’ll notice at the top there’s a dropdown called “Stable Diffusion checkpoint”.

You can use this to switch to any model you have placed in the stable-diffusion-webui/models/Stable-diffusion folder. We’ll choose the model we renamed nai.ckpt earlier.

If you put the model in the folder after you start the WebUI, you will need to restart the WebUI to see the model.

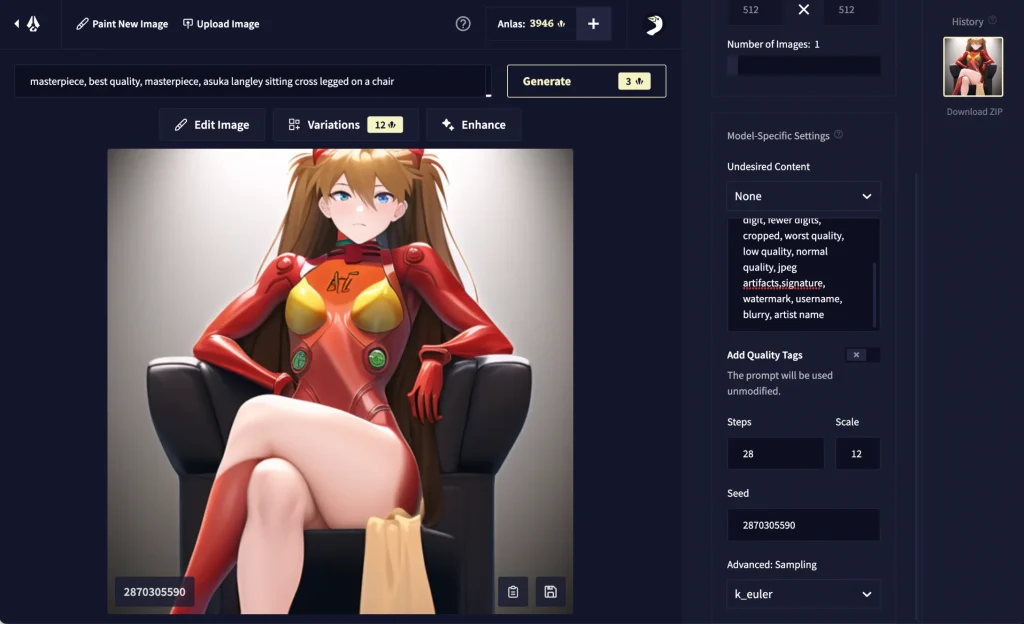

5. Hello Asuka

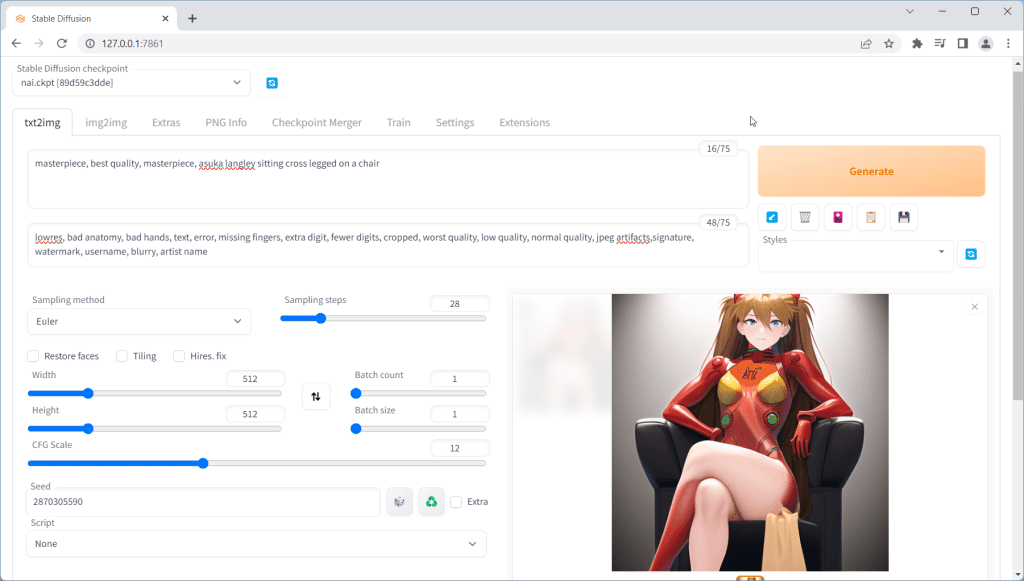

Hello Asuka is a calibration test used to verify that you have everything installed and configured correctly.

The paid NovelAI service will generate this image of Asuka from Evangelion with 95%-100% accuracy when you use the right settings.

If you can recreate this image with your fresh Stable Diffusion & NAI install, then you are successfully emulating NovelAI.

Go to the txt2img tab. Input the following values exactly:

- Prompt: masterpiece, best quality, masterpiece, asuka langley sitting cross legged on a chair

- Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name

- Sampling Steps: 28

- Sampling Method: Euler

- Width: 512

- Height: 512

- CFG Scale: 12

- Seed: 2870305590

- Then, go to the Settings tab. Click on the Stable Diffusion section and look for the slider labeled Clip skip. Bring it to 2. Then click Apply Settings at the top.

Click the big Generate button in the upper right corner, and wait for the image to complete:

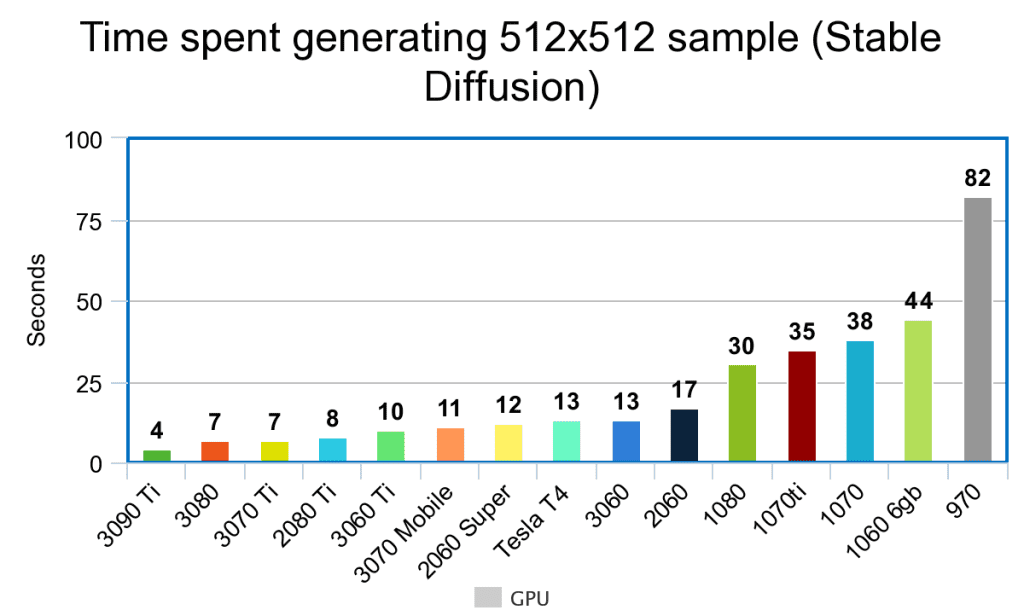
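You can also run the Asuka test without clicking through the UI. This sketch assumes you started the WebUI with the `--api` command-line flag (for example by adding it to `COMMANDLINE_ARGS` in webui-user.bat) and that your version exposes the `/sdapi/v1/txt2img` endpoint with the field names used below; check the API page your own WebUI serves at `/docs` before relying on them. Passing Clip skip through `override_settings` is also an assumption about that API.

```python
import base64
import json
import urllib.request

# Values copied from the Asuka test settings above.
ASUKA_PROMPT = (
    "masterpiece, best quality, masterpiece, "
    "asuka langley sitting cross legged on a chair"
)
ASUKA_NEGATIVE = (
    "lowres, bad anatomy, bad hands, text, error, missing fingers, "
    "extra digit, fewer digits, cropped, worst quality, low quality, "
    "normal quality, jpeg artifacts,signature, watermark, username, "
    "blurry, artist name"
)


def asuka_payload() -> dict:
    """Build a txt2img request body for the Asuka calibration test."""
    return {
        "prompt": ASUKA_PROMPT,
        "negative_prompt": ASUKA_NEGATIVE,
        "steps": 28,
        "sampler_name": "Euler",
        "width": 512,
        "height": 512,
        "cfg_scale": 12,
        "seed": 2870305590,
        # Assumed per-request equivalent of the "Clip skip" slider.
        "override_settings": {"CLIP_stop_at_last_layers": 2},
    }


def txt2img(payload: dict, base_url: str = "http://127.0.0.1:7860") -> bytes:
    """POST the payload to a WebUI started with --api and return the first image."""
    request = urllib.request.Request(
        f"{base_url}/sdapi/v1/txt2img",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        result = json.load(response)
    # Assumes the response lists base64-encoded PNGs under "images".
    return base64.b64decode(result["images"][0])
```

With the WebUI running, save the result with `open("asuka.png", "wb").write(txt2img(asuka_payload()))` and compare it to the target image.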

How long does it take to generate an image?

Here’s a rough estimate for image generation time depending on your GPU:

You can expect these benchmark times to go down as the developers keep on optimising the software for faster and faster generations.

Need a better GPU to generate images faster?

Check out this handy GPU guide.

My Asuka is messed up!

You want to get a result that is almost exactly the same as the target Asuka. “Close enough” probably means something is wrong.

Here are some reasons why you might not be getting the correct Asuka:

- You are not using the VAE (

.vae.pt) file and the configuration (.yaml) file. Check Step 3 again. - Make sure you are using

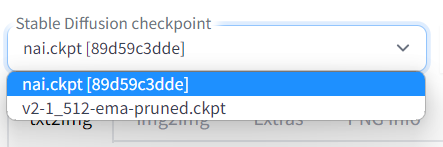

Eulerfor the sampler and notEulera - Make sure you are using the correct model. Check the

Stable Diffusion checkpointdropdown on the top. If your model is correct, the number[89d59c3dde]or[925997e9]should appear after the model name. - Verify that you have entered all the settings correctly: Prompt (did you miss copying a letter?), Negative Prompt, Steps, CFG scale, Seed, Clip Skip in the settings etc.

- You are running Stable Diffusion on your CPU. Not only is this very slow, you will also not be able to pass the Asuka test.

- You have an NVIDIA GTX 1050 Ti. This specific GPU is known to be unable to complete the test.

- You are using a Mac. Macs are known to have trouble completing the test.

Don’t fret if your computer cannot complete the Asuka test. You can still generate high-quality images (it just means you cannot emulate NovelAI 1:1).
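The WebUI shows the model hash for you, but if you want to check a downloaded file before copying it over, here is a sketch based on my understanding of how AUTOMATIC1111 computes the hashes it displays: older versions show 8 hex characters of the SHA-256 of a 64 KiB slice starting 1 MiB into the file, and newer versions show the first 10 hex characters of the SHA-256 of the whole file. Treat that scheme as an assumption; which value you see depends on your WebUI version. Hashing the full multi-GB file takes a while.

```python
import hashlib
from pathlib import Path

# The two values listed above for the correct NAI model.
EXPECTED_HASHES = {"925997e9", "89d59c3dde"}


def legacy_short_hash(path: Path) -> str:
    """8-character hash: SHA-256 of the 64 KiB that start 1 MiB into the file."""
    with open(path, "rb") as f:
        f.seek(0x100000)
        return hashlib.sha256(f.read(0x10000)).hexdigest()[:8]


def full_short_hash(path: Path, chunk_size: int = 1 << 20) -> str:
    """10-character hash: start of the SHA-256 of the whole file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()[:10]


def looks_like_nai(path: Path) -> bool:
    """True if either short hash matches one of the expected values."""
    return bool({legacy_short_hash(path), full_short_hash(path)} & EXPECTED_HASHES)
```

Usage: `looks_like_nai(Path("stable-diffusion-webui/models/Stable-diffusion/nai.ckpt"))`.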

NovelAI Emulation

To show you how close the emulation actually is, I’ve input the exact Asuka test settings into the official NovelAI website:

Here are the results compared:

You’ll notice the only difference is the sharpness of the separation of the red leg. This difference is acceptable because it is caused by launching the WebUI with custom parameters (--medvram, --lowvram, or --no-half).

You can use the same settings you did in the Asuka test to emulate any NovelAI image. That is:

- Set the sampler to Euler (not Euler a)

- Use 28 steps

- Set CFG Scale to 11

- Use masterpiece, best quality at the beginning of all prompts

- Use nsfw, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, artist name as the negative prompt

- In the Settings tab, click the Stable Diffusion sub-section, change the Clip skip slider to 2, and then click “Apply settings”
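If you generate a lot of prompts, a small helper can apply this checklist for you. This is a hypothetical sketch: `nai_style_prompt` only adds the quality tags when they are missing, and the dictionary keys are illustrative labels for the settings listed above, not the WebUI’s own setting names.

```python
QUALITY_PREFIX = "masterpiece, best quality"
NAI_NEGATIVE = (
    "nsfw, lowres, bad anatomy, bad hands, text, error, missing fingers, "
    "extra digit, fewer digits, cropped, worst quality, low quality, "
    "normal quality, jpeg artifacts, signature, watermark, username, "
    "blurry, artist name"
)


def nai_style_prompt(prompt: str) -> str:
    """Prepend the quality tags unless the prompt already starts with them."""
    prompt = prompt.strip()
    if prompt.startswith(QUALITY_PREFIX):
        return prompt
    return f"{QUALITY_PREFIX}, {prompt}" if prompt else QUALITY_PREFIX


def nai_emulation_settings(prompt: str) -> dict:
    """Bundle the emulation checklist; key names are illustrative only."""
    return {
        "prompt": nai_style_prompt(prompt),
        "negative_prompt": NAI_NEGATIVE,
        "sampler": "Euler",
        "steps": 28,
        "cfg_scale": 11,
        "clip_skip": 2,
    }


print(nai_style_prompt("hatsune miku"))  # masterpiece, best quality, hatsune miku
```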

Prompting

Basics

- AUTOMATIC1111 does not save your image history! Save your favorite images or lose them forever.

- Begin your prompts with masterpiece, best quality (the NovelAI website does this by default).

- Start with the following in the Negative prompts: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, artist name

- Use -1 in the seed field to randomize it, or specify a seed to ensure consistency across generations.

I’ve written some detailed prompt techniques to improve your image results.

If you are applying those techniques in the WebUI, replace {} with (). Stable Diffusion (the WebUI) uses () for emphasis, while the NovelAI website uses {}.
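The swap is mechanical, so it is easy to script when you paste in prompts written for the NovelAI website. This sketch is a plain character substitution of braces for parentheses; it leaves square brackets untouched and does not try to match the exact emphasis strength of the two syntaxes, which may not be identical.

```python
# Plain character swap: NovelAI {emphasis} -> WebUI (emphasis).
NAI_TO_WEBUI = str.maketrans({"{": "(", "}": ")"})


def nai_to_webui(prompt: str) -> str:
    """Convert NovelAI curly-brace emphasis into WebUI parentheses."""
    return prompt.translate(NAI_TO_WEBUI)


print(nai_to_webui("masterpiece, {{blue eyes}}, [smile]"))
# masterpiece, ((blue eyes)), [smile]
```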

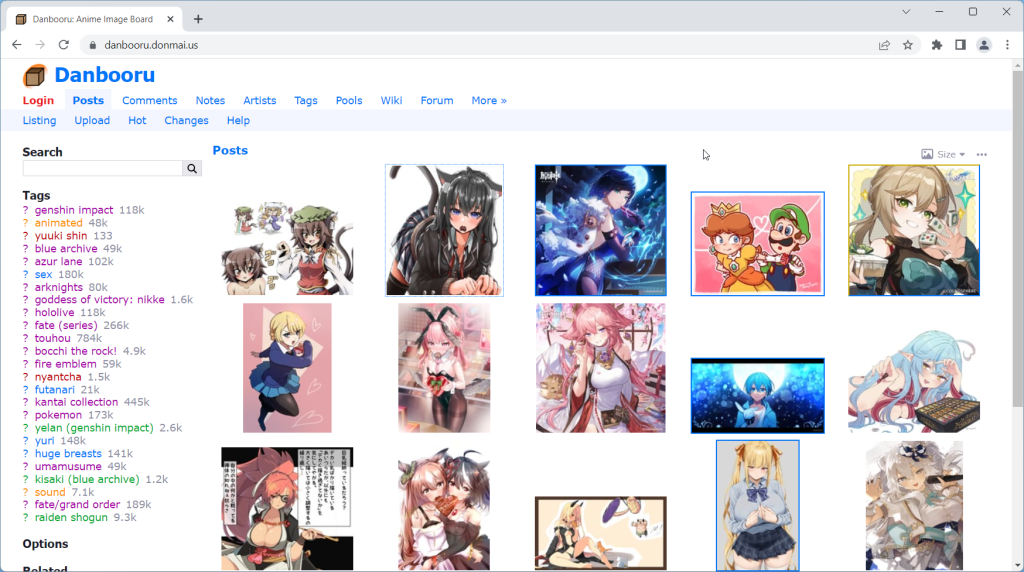

Danbooru Tags

The fastest way to improve your prompting is by checking out Danbooru tag groups.

For the unacquainted, Danbooru is the largest anime imageboard (as well as one of the top 1000 websites in the world by traffic).

Most anime models use Danbooru for all or part of their training data.

The reason Danbooru makes such a good dataset for AI/ML models is its robust tagging system. Every single image has tags that summarize everything in the image, from the major categories (artist, character, fandom) to the tiniest of details (‘feet out of frame’, ‘holding food’, ‘purple bowtie’, etc.).

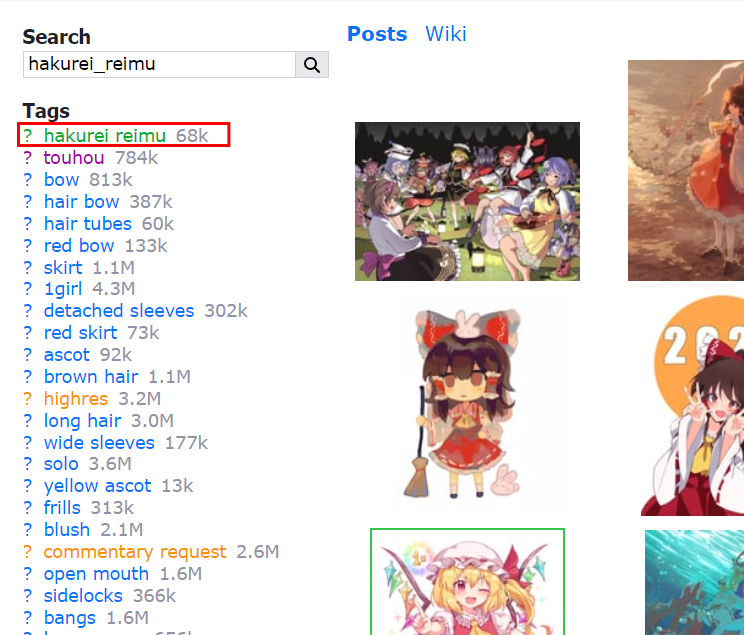

If a Danbooru tag has 1K+ images, there is a high likelihood that NovelAI ‘knows’ what it is. Check individual tags to make sure they have enough images.
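If you collect image counts for a batch of candidate tags, the 1K rule of thumb above becomes a one-line filter. The tag names and counts in this sketch are made up for illustration; plug in the counts you see on each tag’s Danbooru page.

```python
# Rule of thumb from the text: tags with 1K+ Danbooru images are likely known.
KNOWN_THRESHOLD = 1000


def likely_known(tag_counts: dict, threshold: int = KNOWN_THRESHOLD) -> list:
    """Return tags whose image count meets the threshold, most common first."""
    known = [(tag, count) for tag, count in tag_counts.items() if count >= threshold]
    known.sort(key=lambda item: item[1], reverse=True)
    return [tag for tag, _ in known]


# Made-up tag names and counts, for illustration only.
sample = {"example_common_tag": 52000, "example_niche_tag": 1200, "example_rare_tag": 85}
print(likely_known(sample))  # ['example_common_tag', 'example_niche_tag']
```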

This explains why NovelAI can’t generate some ‘popular’ characters.

The characters simply don’t have enough fanart on Danbooru.

masterpiece, best quality, hakurei reimu

masterpiece, best quality, hatsune miku

Tip: For characters, you’ll get the best results by writing character names exactly as they are tagged in Danbooru.
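Danbooru writes tags with underscores (hakurei_reimu), while the prompts above use spaces. Also, parentheses are emphasis syntax in the WebUI, so a tag with a series qualifier such as saber_(fate) needs its parentheses escaped with backslashes. A minimal sketch under those two assumptions:

```python
def danbooru_tag_to_prompt(tag: str) -> str:
    """Turn a Danbooru tag like 'hakurei_reimu' into WebUI prompt text."""
    text = tag.strip().replace("_", " ")
    # Parentheses mean emphasis in the WebUI, so escape literal ones.
    return text.replace("(", r"\(").replace(")", r"\)")


def character_prompt(tag: str) -> str:
    """Prefix the converted tag with the usual quality tags."""
    return f"masterpiece, best quality, {danbooru_tag_to_prompt(tag)}"


print(character_prompt("hatsune_miku"))        # masterpiece, best quality, hatsune miku
print(danbooru_tag_to_prompt("saber_(fate)"))  # saber \(fate\)
```

Tags where the underscore is part of the meaning, such as emoticons like ^_^, would be mangled by this conversion, so check those by hand.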

Prompting Guides

Here are the best resources I’ve found so far (email me if you have something you’d like to add to this list):

P1atdev

Most comprehensive NovelAI prompt library for all character features. Only in Japanese.

Codex of Quintessence

Gigantic Chinese tome of prompt knowledge, divided into multiple scrolls.

Models to try next

NovelAI/NAI Diffusion is the model that started anime generation. It’s a culturally and historically significant model.

Many models released since then use NAI as a base and greatly improve its quality and versatility. Here are some recommendations:

High quality model created by fine-tuning NAI. The most popular anime model, and for good reason. Try this one next.

Collection of merged models (NAI + Anything + Gape). BloodOrangeMix is popular.

Stable Diffusion + Danbooru. One of the better known models.

Detailed anime model.

For more models, check out this full list of anime models.

Next Steps

Models and new features for the WebUI are coming out every day.

Try the rest of our guides in the Stable Diffusion for Beginners series:

Stable Diffusion for Beginners

Part 1: Getting Started: Overview and Installation

Part 2: Stable Diffusion Prompts Guide

Part 3: Stable Diffusion Settings Guide

Part 4: LoRAs

Part 5: Embeddings/Textual Inversions

Part 6: Inpainting

Part 7: Animation

Troubleshooting

RuntimeError: Couldn’t install torch.

Fix: Make sure you have Python 3.10.x installed. Type python --version in your Command Prompt. If you have an older version of Python, you will need to uninstall it and reinstall it from the Python website.

Fix #2: Your Python version is correct but you still get the same error? Delete the venv directory in stable-diffusion-webui and re-run webui-user.bat

RuntimeError: Cannot add middleware after an application has started
